Tim Dettmers on Twitter: "Updated GPU recommendations for the new Ampere RTX 30 series are live! Performance benchmarks, architecture details, Q&A of frequently asked questions, and detailed explanations of how GPUs and

GPU performance over time. Limitations in the physics of semiconductors...

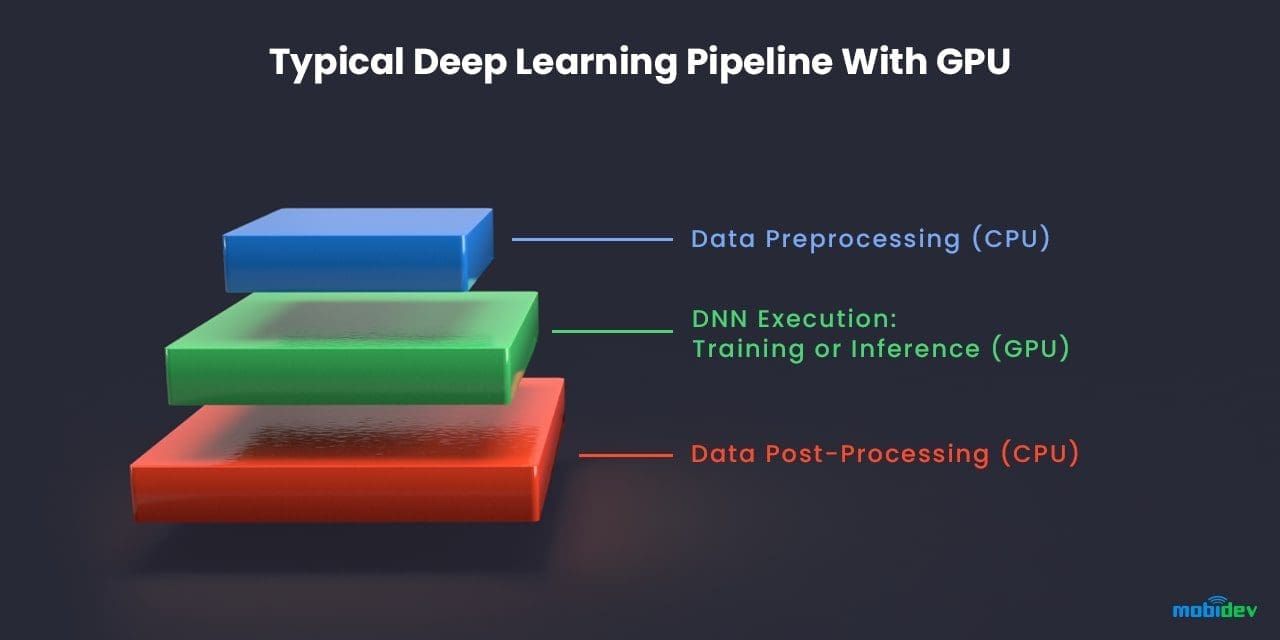

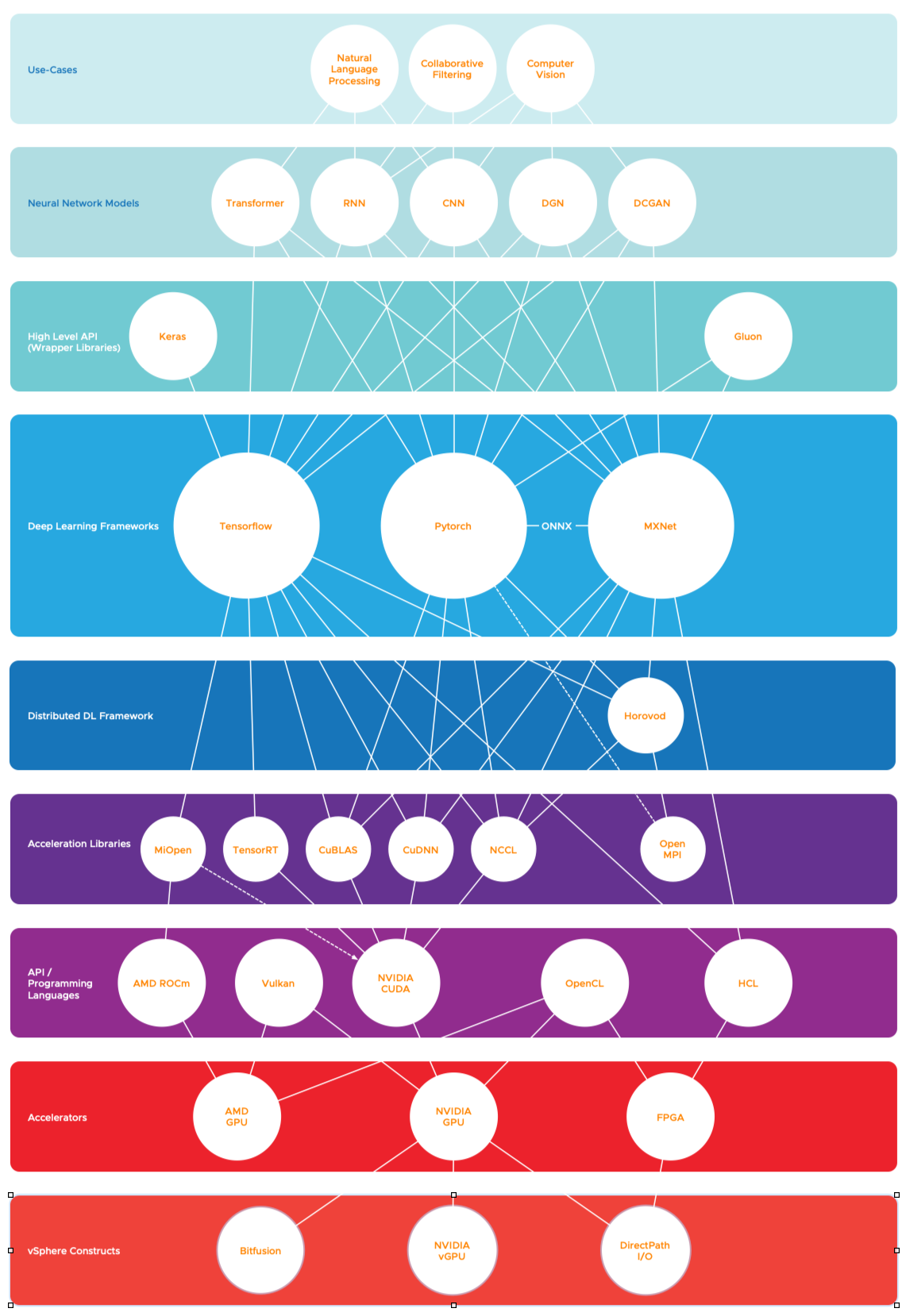

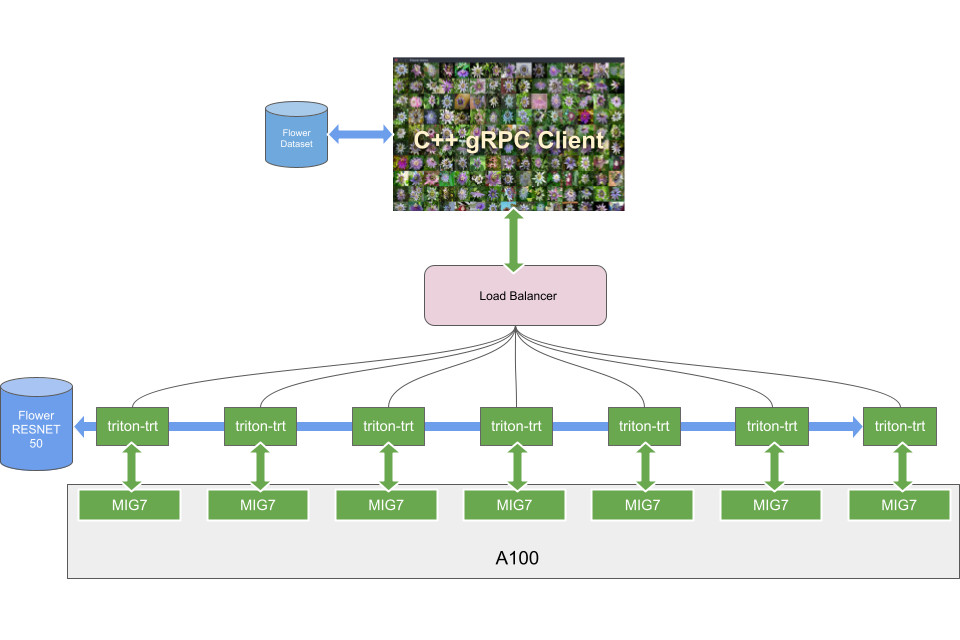

GTC 2020: Accelerate and Autoscale Deep Learning Inference on GPUs with KFServing | NVIDIA Developer

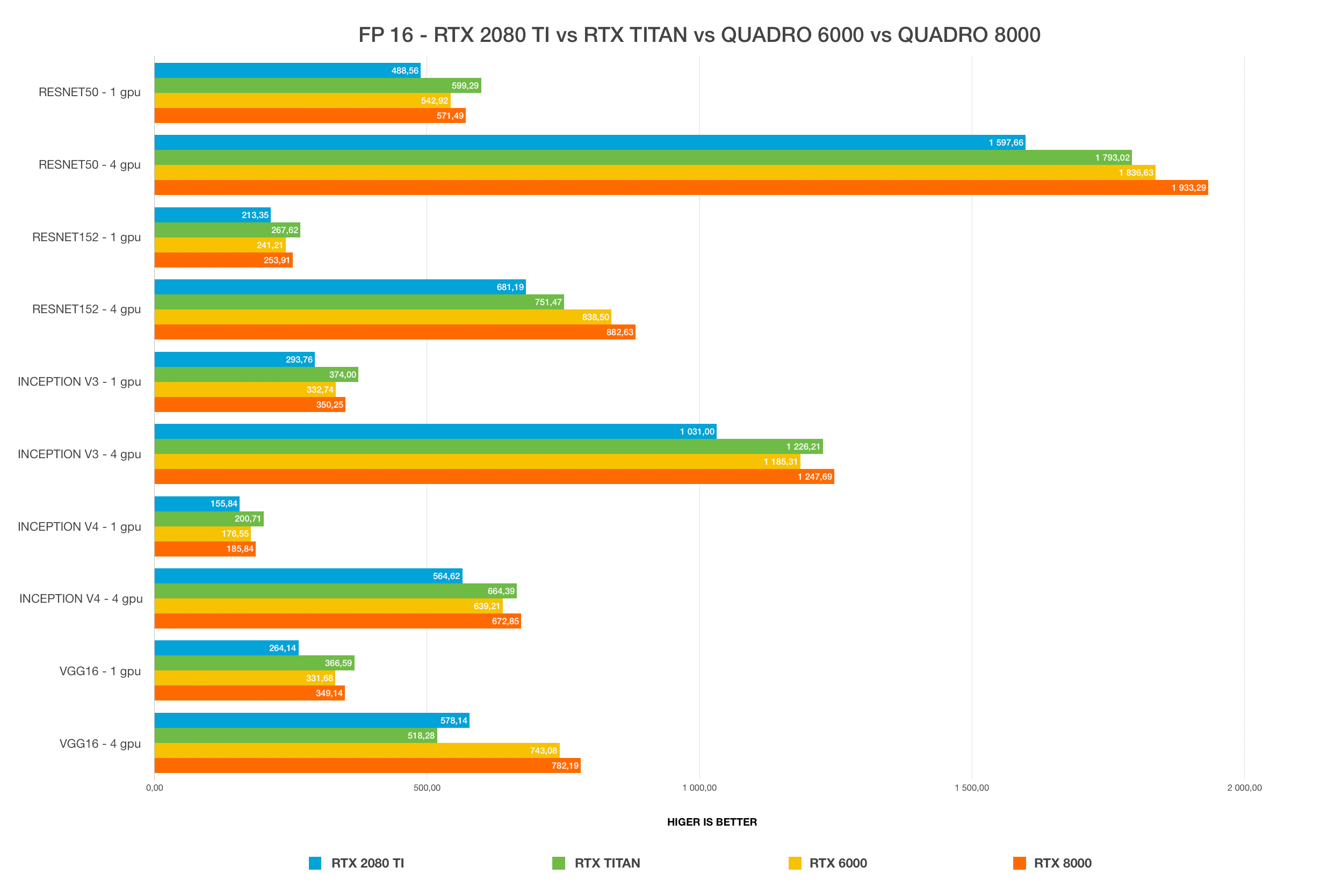

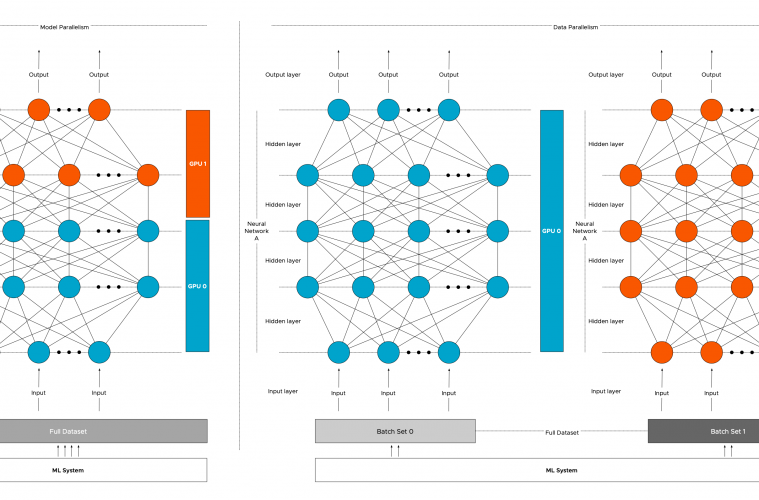

How to Select the Best GPU for Deep Learning In 2020 | Deep learning, Best GPU, Artificial neural network

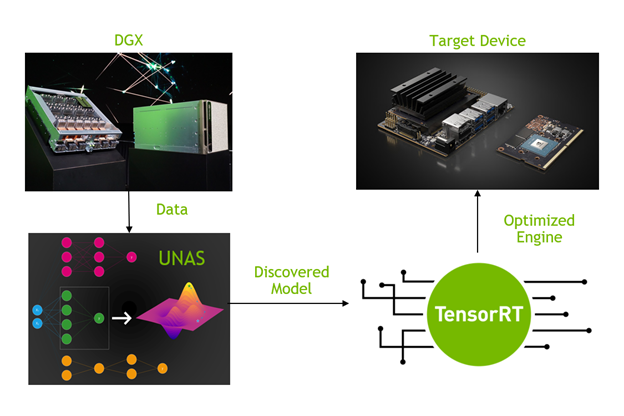

Discovering GPU-friendly Deep Neural Networks with Unified Neural Architecture Search | NVIDIA Technical Blog